As a college student, I remember the first time I used a chatbot powered by GPT (Generative Pretrained Transformer) technology. I was struggling to understand a concept for a class, and rather than spend hours sifting through dense textbook pages, I decided to give it a try. Within minutes, I was having a conversation with a virtual assistant who was able to provide clear, concise explanations and even suggest additional resources for further reading. It was a revelation, and one that has made me a frequent user of ChatGPT technology.

However, as convenient and efficient as ChatGPT can be for research and learning, it’s important to consider the potential implications for academia and intellectual work. On the one hand, ChatGPT offers a virtual research assistant at our fingertips, able to retrieve information and provide thoughtful responses to our questions on demand. It’s like having a tutor available 24/7, offering clarifications and additional resources as needed. This can be especially beneficial for students who may not have access to traditional academic support or prefer to learn independently.

But on the other hand, the ease and accessibility of ChatGPT also raises questions about the future of intellectual work. As automation becomes increasingly prevalent, will the role of the human researcher become obsolete? Will we see a shift towards a reliance on chatbots to generate reports and papers, rather than fostering the skills and knowledge of individuals? It’s a slippery slope, and one that we should approach with caution.

In addition to the potential impact on employment, there is also the issue of academic integrity to consider. Plagiarism is already a major problem in academia, and ChatGPT technology could make it even easier for students to cheat. All it would take is for a student to feed a chatbot a few sentences from a paper and have it generate a fully fleshed-out essay. This not only undermines the credibility of individual students, but also the credibility of academia as a whole.

To address these concerns, it is important for educators to find ways to integrate ChatGPT technology into the academic experience in a way that encourages students to think critically and engage with the material. This might involve setting guidelines for the appropriate use of chatbots, or incorporating exercises that require students to use their own knowledge and problem-solving skills.

In conclusion, the rise of ChatGPT is a double-edged sword. It can bring convenience and efficiency to research and learning, but it also has the potential to compromise critical thinking skills and academic integrity. It is up to us as students and educators to use it responsibly and ensure that the pursuit of knowledge remains a vital and meaningful endeavor.

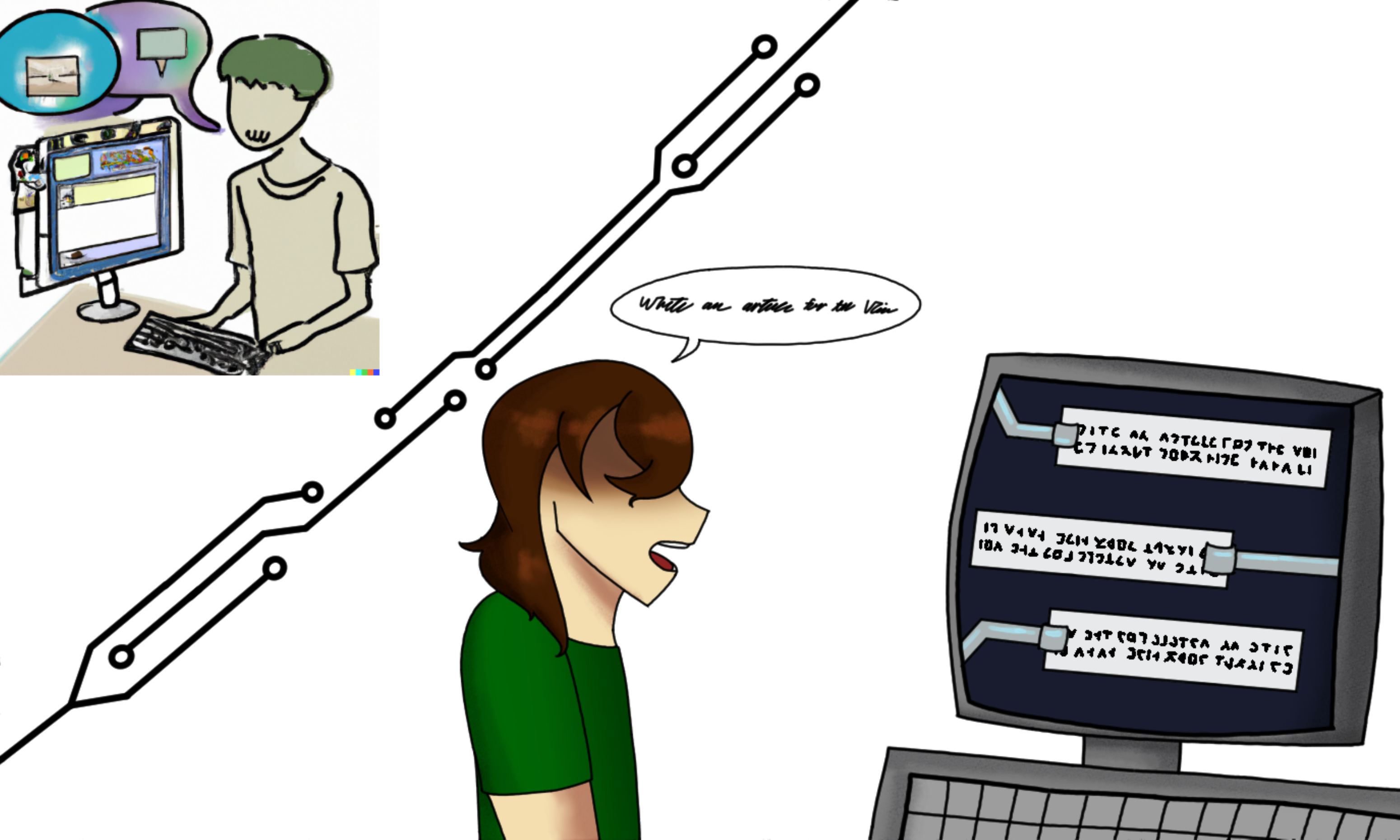

Hi readers! Surprise—everything in this article up to now was actually written not by me (Alec), but by my artificial counterpart AIec. The previous paragraphs were generated by ChatGPT with the following prompt: “Write a 500-word opinion article for a news magazine about the rise of ChatGPT and its implications for college students, academia, and the automation of intellectual work. Use varied sentence structures to seem more natural and use a metaphor and parallelisms. Start with a short anecdote.”

There’s a lot to unpack in what ChatGPT provided for us. Most notably, the article it produced, while linguistically sophisticated, is mediocre at best, especially in terms of content. Its arguments, despite being (almost surprisingly) well-elucidated, aren’t novel, and it doesn’t provide any real solutions to the problems it raises. Among the thousands of articles being published about Artificial Intelligence (AI) right now, ChatGPT’s adds nothing unique, because AIs rely on existing data to learn. ChatGPT is necessarily limited to assembling and conglomerating what has already been produced. As a result, ChatGPT is uncreative, unable to truly imitate human creation, and certainly incapable of the analysis and insight required for an excellent college-level paper.

However, ChatGPT is still in its infancy, with rumors of newer, more capable versions already on their way. Even as a prototype, the chatbot is more than capable of going beyond the prompt it is given, as the article above shows. In my initial instruction, I never told it to pretend to be a college student. It decided on its own to provide a made-up, yet reasonable, anecdote based on its knowledge of the genre “news magazine articles.” This shows an impressive command not just of content reproduction, but also of form. It is here that ChatGPT reveals how powerful a tool it can be. And just like the most influential innovations of the past, including the printing press, the assembly line, and the gun, it will have a tremendous impact on our future.

With regard to academia, article after article has raised the alarm about plagiarism when it comes to the bot, so much so that even ChatGPT exhibited a good grasp of the issue in our article. New York City’s Department of Education has already banned ChatGPT in public schools for fear of plagiarism. Advocates for these bans argue that students will use ChatGPT to do their work and fail to learn the skills necessary to succeed in the real world. But these concerns are overblown. Several systems can already test articles for AI writing, and they can easily be incorporated into existing anti-plagiarism software like Turnitin. While schools may not have access to these programs immediately, and students will certainly be tempted to use ChatGPT until they do, AI plagiarism is not likely to represent a long-term issue.

In fact, access to AI text generation in an educational context may actually be great for accessibility. Academic language can act as a barrier to entry into academia for those who never learned its norms and intricacies, particularly for historically marginalized communities without the same access to education that builds these skills. Chatbots that understand and master these forms can level the playing field and lead to academic environments that encourage problem-solving and creativity rather than adherence to certain writing formulas. Without having to focus on how to formulate their ideas in the “academically appropriate” way, students will have more energy to spend on content and critical analysis, portable skills that will last a lifetime.

In that way, ChatGPT may become akin to the calculator. We give students a calculator on exams because we recognize that learning how to use such a powerful tool can give them the opportunity to solve more complex and realistic problems. The same is true for ChatGPT. But since a tool means nothing unless you know how to use it, we should be teaching students how to use AI in order to engage with higher-level concepts or readings, not teaching them to avoid it.

If the implications of ChatGPT in an academic environment will be substantial, the implications in greater society will be even more profound. ChatGPT is able to draft lease agreements based on a given location and even code websites, tasks that have previously required significant training. For the vast majority of people, this technology will only make life easier—and less expensive. However, for those who make a living off of these skills, the proliferation of AI could be detrimental.

Automation and job replacement are where the trouble with ChatGPT and AI really begins. In a way, ChatGPT is like any form of automation: New technology arrives that makes human labor unnecessary, decreasing costs but also putting people out of a job. The assembly line dramatically decreased the number of necessary human workers while ultimately improving the quality of life by increasing productivity and creating cheaper products. Still, AI differs from traditional automation in that it threatens to automate “high-skilled” jobs that no one 20 years ago could have imagined being done artificially. In the next few decades, law firms, newspapers, or even tech companies may begin to outsource some of their work to AI. (The two designs on this spread came from the same set of instructions: one was produced by a Voice designer and the other by an AI, the ethics of which could be an article all on its own.)

However, automation is not instantaneous, nor is it equal in the communities it impacts. Research has found that Black workers are disproportionately employed in support roles rather than directive ones—more commonly hired as service workers, administrative support workers, and laborers instead of executives, professionals, or technicians. These support roles, which already have lower median pay, are far more likely to be automated. A long history of marginalization has kept Black Americans out of higher-paying directive jobs through a combination of substandard school systems, discriminatory hiring practices, implicit bias, and other compounding effects of structural racism. Additionally, a 2017 British study found that about 70 percent of the country’s jobs most at risk for automation are held by women. While those in power may be able to adapt to this automation and make it work for them, marginalized people are likely to get left behind as automation exacerbates inequality and renders certain jobs or industries obsolete.

Just as earlier automation caused massive labor shifts, this new automation will do the same. There are certain jobs AI can’t do yet. ChatGPT does not yet have the capability to perform jobs that require creativity and problem-solving skills, such as those of therapists, teachers, writers, developers, or marketers. However, just because ChatGPT cannot do these jobs does not mean that anyone can just pick them up. People whose jobs are replaced will need to invest significant resources into retraining, the process of learning new skills or preparing to enter a completely new career. And retraining is expensive. Many people, especially members of historically marginalized communities, may not have the wealth required for retraining in the short term. Even for the generations that will grow up with powerful AIs as part of their lives, how easily they adapt to these tools will vary. Curricular changes adapting to the evolving job market are most likely to take place in wealthier school districts with larger budgets rather than in underserved areas.

Eventually, if the power of AI continues to grow, it’s possible that even these jobs will be replaced, possibly to a point where almost every job could be automated.

The idea of a dramatic reduction in the need for a human labor force may seem absurd at this point, but if realized, it would certainly be a dark one. For many, it evokes images of a dystopian, even apocalyptic, world. And while the automation of nearly the entire labor force might not be in the near future, it’s hard to predict the long-term effects of an exponentially learning AI.

If AI does become a realistic alternative to human labor, our political and economic institutions will have to adjust, likely with great difficulty. Increased reliance on AI would lead to an unprecedented rise in unemployment, and governments would need to find a new way of transferring benefits accrued from cheap labor to those replaced by technology. Currently, private corporations are forced to transfer at least part of their profits to their workers because if workers don’t feel that they are making enough, they will move to another company. But AI doesn’t require a living wage. If corporations no longer require much human labor, more of their revenue can be passed on to those who own the company. In this way, mass automation will only lead to greater wealth inequality under capitalism. Thus, increased unemployment could lead to more pressure on the government to regulate private businesses and implement greater public services or even universal basic income to help people stay afloat. This would be a major departure from the U.S. government’s standard operating procedure, and the chances of a quick adaptation once automation reaches a tipping point are low.

While the scenario above is speculative, it demonstrates that the emergence of this one chatbot will have dramatic effects on our future. The truth is we have no idea how capable AI will become. Despite this, to ensure that an AI future is an equitable one, we need to begin these conversations now, especially among politicians with the power to regulate AI. Proactivity could be the difference between AI being a tool for all of humanity or a tool for those with the wealth to wield it.
